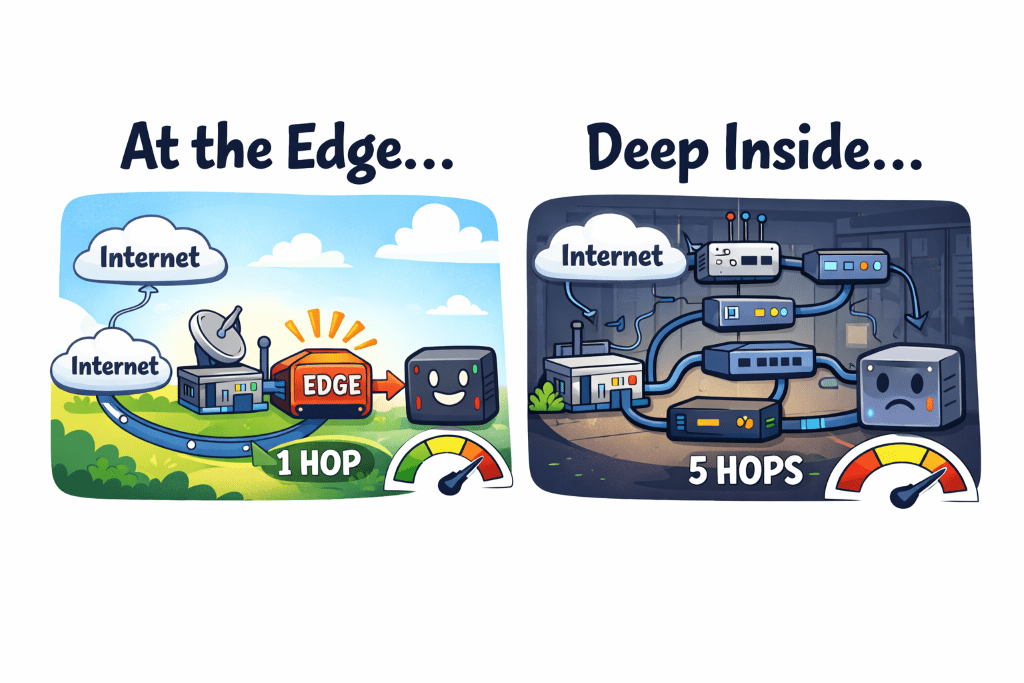

In Part 4, I moved m5proxy—my BananaPi M5 reverse proxy—off the ProCurve switch and directly onto the UCG Max. Edge service at the network edge. Clean topology. Fewer hops. Good.

What I didn't fix was the underlying problem: nginx has no idea if the servers behind it are actually alive.

The Problem With nginx (For This Use Case)

nginx is great. I'm not here to bash it. But my setup exposed one of its real limitations: DNS-based backends with no health checking.

My Kubernetes clusters are accessed via DNS round-robin. tfx-production.gerega.net resolves to multiple worker node IPs. nginx uses those IPs… once, at startup (or reload). After that, it holds them in memory. If a node goes down, nginx keeps routing to it until the next config reload. There's no "hey, this backend isn't responding" logic.

The failure mode is unpleasant: requests to the dead node time out. nginx eventually gives up and returns a 502. Your service looks down even though the cluster is fine—you're just unlucky enough to land on the dead node.

I'd lived with this because the clusters were stable. But the right fix was obvious: something that actually checks whether backends are alive.

Enter HAProxy

HAProxy solves this with three features I actually wanted:

Active health checks. TCP probe every 10 seconds per node. Two failures → node is marked down. Two recoveries → node is marked up. No human intervention, no reload required.

Dynamic DNS re-resolution. The server-template + resolvers combination means HAProxy re-queries DNS periodically. When node IPs change (cycling nodes, adding capacity), HAProxy picks it up automatically.

Stats dashboard. A real-time view of every backend: how many slots are allocated, how many are up, request rates, response times. Port 9000, built in, no Grafana required.

The Migration Plan: Parallel First, Cutover Later

I wasn't going to just swap nginx for HAProxy and hope for the best. The plan was:

- Install HAProxy, run it on port 8443 while nginx stays on 443

- Validate every single backend through HAProxy before touching nginx

- Flip ports, stop nginx, done

This is the "strangler fig" approach for proxies: the new thing runs alongside the old thing, gets tested under real conditions, and only takes over when you're confident.

Phase 1: Install and Configure

HAProxy 2.8.16 is in the Ubuntu 24.04 repos. Installation is one command.

The trickier part was certificates. nginx uses separate ssl_certificate and ssl_certificate_key directives. HAProxy wants them concatenated into a single PEM file: fullchain first, then private key.

for domain in mattgerega.net mattgerega.com mattgerega.org; do

cat /etc/letsencrypt/live/$domain/fullchain.pem \

/etc/letsencrypt/live/$domain/privkey.pem \

> /etc/haproxy/certs/$domain.pem

chmod 600 /etc/haproxy/certs/$domain.pem

doneThen a deploy hook in /etc/letsencrypt/renewal-hooks/deploy/ so certbot rebuilds the PEMs and reloads HAProxy automatically on every renewal. The hook is five lines of bash. Certbot's DNS-01 challenge via Cloudflare has zero dependency on port 80, so swapping the web server is invisible to the renewal process.

The backend config for the Kubernetes clusters uses server-template, which is HAProxy's way of saying "allocate N server slots, resolve this DNS name, and fill them dynamically":

backend be_prod_envoy

mode http

balance roundrobin

option tcp-check

server-template tfx-prod 5 tfx-production.gerega.net:30080 \

check inter 10s fall 2 rise 2 \

resolvers localns resolve-prefer ipv4 init-addr noneinit-addr none tells HAProxy not to panic if DNS doesn't resolve at startup. It'll retry. The resolvers localns block points directly at my UCG Max DNS (192.168.60.1:53), bypassing systemd-resolved entirely.

Phase 2: Validating Backends

With HAProxy running on 8443, I curled every vhost:

200 argo.mattgerega.net

302 grafana.mattgerega.net

200 cloud.mattgerega.net

200 home.mattgerega.net

...A few things showed up immediately.

The dead server. be_ha_test was showing down in the stats dashboard. This was a backend pointing at 192.168.1.115:8123—a Home Assistant test instance. 100% packet loss. Completely unreachable. The nginx config for ha-test.mattgerega.net had its IP restrictions commented out, so it was silently routing to a dead host this whole time.

nginx had no idea. It was happily accepting requests for ha-test.mattgerega.net and forwarding them into the void.

HAProxy told me in about 20 seconds of running.

The fix: remove the backend entirely. The test instance is gone for good.

The Garage backends. The Garage S3 API (port 3900) and web UI (port 3902) had no health checks at all—I'd just copied the structure from nginx without thinking. Added TCP checks to both. Garage S3 returns 403 on unauthenticated requests, and the web UI returns 404 at root, so HTTP checks would require widening the acceptable status range. TCP checks are simpler and answer the actual question: is the port open?

The IP restrictions. My config denies certain backends to everything except specific subnets—internal cluster access is LAN-only, for example. Testing from the proxy server itself (192.168.60.x, the Services VLAN) correctly returned 403 for backends that don't allow the Services network. The ACLs were firing as designed.

Phase 3: Cutover

Three changes to the config:

bind *:8443→bind *:443bind *:8444→bind *:5001(Synology DSM passthrough)- Add back the port 80 frontend for HTTP→HTTPS redirects

Then:

sudo systemctl stop nginx

sudo systemctl disable nginx

sudo systemctl reload haproxyTotal downtime: zero. HAProxy's reload is graceful—it forks a new worker with the new config while the old worker finishes its in-flight connections, then exits. No dropped requests.

Smoke test from the proxy server itself confirmed HTTPS backends responding and HTTP redirect returning 301.

What I Actually Got

Immediate visibility. The stats dashboard at :9000 shows every backend, every server slot, whether each node is up or down, and live request rates. I can see at a glance that production has 2 active nodes, internal has 4, nonprod has 3. Before, I had no idea.

Automatic failover. If a K8s worker node goes down, HAProxy stops sending it traffic within 20 seconds (2 failed checks at 10s intervals) without any intervention. nginx would have kept routing to it until I noticed and reloaded.

The dead server caught. I had a backend pointing at a dead host for an unknown amount of time. Only found it because HAProxy's health check turned it red immediately.

Dynamic node discovery. DNS re-resolution means adding a worker node to the cluster automatically makes it available to HAProxy. Remove a node gracefully, and it disappears from DNS, then from HAProxy's active pool.

The Part That Surprised Me

I expected the migration to be technically interesting but operationally boring. It was the opposite.

The most valuable thing wasn't the health checking or the stats dashboard. It was running the parallel validation and discovering that ha-test.mattgerega.net had been routing to a dead host for who knows how long. nginx had never complained. Every request to that vhost was just… silently failing.

That's the real argument for active health checking: it doesn't just prevent future failures. It surfaces the failures that are already happening that you don't know about yet.

What's Next

Phase 4 of the HAProxy migration plan covers routing K8s API server traffic (port 6443) through HAProxy via TCP passthrough with SNI-based cluster routing. Right now, kubeconfigs point directly at control plane nodes. Routing through HAProxy means active health checking for the API server too—if a control plane node goes down, kubectl automatically routes to a healthy one.

That involves certificate SANs, RKE2 certificate rotation, and the usual amount of "this should be simple" complexity. Future post.

Part 5 of the home network rebuild series. Read Part 4: Network Hops and Reverse Proxy Placement